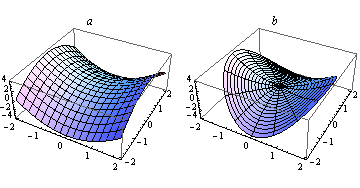

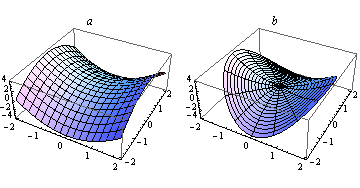

A common task in mathematics and its applications is to transform between different coordinate systems. For example, the surface in Figure 1a can be represented by the Cartesian equation

$$z = x^2 - y^2.$$

However, the same surface can also be represented in polar coordinates $(r, \theta)$, where $x = r\cos\theta$ and $y = r\sin\theta$, by the equation

$$z = r^2\cos 2\theta$$

(see Figure 1b).

Figure 1: (a) the surface $z = x^2 - y^2$ in Cartesian coordinates and (b) the equivalent surface $z = r^2\cos 2\theta$ in polar coordinates
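To see why the two equations describe the same surface, substitute the standard polar relations $x = r\cos\theta$ and $y = r\sin\theta$ into the Cartesian equation and use the double-angle identity $\cos 2\theta = \cos^2\theta - \sin^2\theta$:

$$
\begin{aligned}
z = x^2 - y^2 &= r^2\cos^2\theta - r^2\sin^2\theta \\
&= r^2\left(\cos^2\theta - \sin^2\theta\right) \\
&= r^2\cos 2\theta .
\end{aligned}
$$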

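In this situation, we can think about the behaviour of $z$ as $x$ and $y$ vary, or about its behaviour as $r$ and $\theta$ vary. Accordingly, we can form the partial derivatives

$$\frac{\partial z}{\partial x} \quad \text{and} \quad \frac{\partial z}{\partial y},$$

and also the partial derivatives

$$\frac{\partial z}{\partial r} \quad \text{and} \quad \frac{\partial z}{\partial \theta}.$$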
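For the example surface, both sets can be written down directly: differentiate $z = x^2 - y^2$ with respect to $x$ and $y$, and $z = r^2\cos 2\theta$ with respect to $r$ and $\theta$:

$$
\frac{\partial z}{\partial x} = 2x, \quad \frac{\partial z}{\partial y} = -2y, \qquad
\frac{\partial z}{\partial r} = 2r\cos 2\theta, \quad \frac{\partial z}{\partial \theta} = -2r^2\sin 2\theta .
$$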

What concerns us here is how these two sets of partial derivatives are related.

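The answer comes from Taylor's theorem. Consider the surface $z = z(x, y)$, where $x$ and $y$ are in turn functions of $r$ and $\theta$:

$$x = x(r, \theta), \qquad y = y(r, \theta).$$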

Suppose that we change $r$ by $\delta r$ while holding $\theta$ constant. In doing so, we change both $x$ and $y$: say by $\delta x$ and $\delta y$ respectively. Then the change in $z$ is

$$\delta z = z(x + \delta x,\, y + \delta y) - z(x, y).$$

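But, by Taylor's theorem,

$$z(x + \delta x,\, y + \delta y) = z(x, y) + \frac{\partial z}{\partial x}\,\delta x + \frac{\partial z}{\partial y}\,\delta y + (\text{terms of second and higher order in } \delta x, \delta y),$$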

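and therefore

$$\delta z \approx \frac{\partial z}{\partial x}\,\delta x + \frac{\partial z}{\partial y}\,\delta y,$$

with an error that is of second order in $\delta x$ and $\delta y$.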

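Dividing by $\delta r$ and taking the limit as $\delta r \to 0$ (so that $\delta x$ and $\delta y$ also tend to zero), the higher-order terms vanish and we obtain

$$\frac{\partial z}{\partial r} = \frac{\partial z}{\partial x}\frac{\partial x}{\partial r} + \frac{\partial z}{\partial y}\frac{\partial y}{\partial r}.$$

The derivatives with respect to $r$ are partial derivatives because $\theta$ is held constant throughout.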

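Similarly, holding $r$ constant and varying $\theta$,

$$\frac{\partial z}{\partial \theta} = \frac{\partial z}{\partial x}\frac{\partial x}{\partial \theta} + \frac{\partial z}{\partial y}\frac{\partial y}{\partial \theta}.$$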

Together, these form the chain rule for partial differentiation.
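As a quick numerical check, the sketch below applies the chain rule to the example surface $z = x^2 - y^2$ with the standard polar relations $x = r\cos\theta$, $y = r\sin\theta$, and compares the result with differentiating $z = r^2\cos 2\theta$ directly. The function names are illustrative choices, not taken from the text.

```python
import math

# Example surface z = x^2 - y^2, with x = r cos(theta), y = r sin(theta).

def chain_rule_partials(r, theta):
    """Return (dz/dr, dz/dtheta) computed with the chain rule."""
    x, y = r * math.cos(theta), r * math.sin(theta)
    # Partial derivatives of z with respect to the Cartesian variables.
    dz_dx, dz_dy = 2 * x, -2 * y
    # Partial derivatives of x and y with respect to the polar variables.
    dx_dr, dy_dr = math.cos(theta), math.sin(theta)
    dx_dtheta, dy_dtheta = -r * math.sin(theta), r * math.cos(theta)
    dz_dr = dz_dx * dx_dr + dz_dy * dy_dr
    dz_dtheta = dz_dx * dx_dtheta + dz_dy * dy_dtheta
    return dz_dr, dz_dtheta

def direct_partials(r, theta):
    """Differentiate z = r^2 cos(2 theta) directly with respect to r and theta."""
    return 2 * r * math.cos(2 * theta), -2 * r**2 * math.sin(2 * theta)

r, theta = 1.5, 0.4
print("chain rule:", chain_rule_partials(r, theta))
print("direct:    ", direct_partials(r, theta))
```

The two pairs agree to rounding error at any point, which is what the chain rule predicts: $2x\cos\theta - 2y\sin\theta = 2r(\cos^2\theta - \sin^2\theta) = 2r\cos 2\theta$.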

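The observation that

$$\delta z \approx \frac{\partial z}{\partial x}\,\delta x + \frac{\partial z}{\partial y}\,\delta y$$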

is useful in its own right: it lets us estimate the error in $z$ from known errors in $x$ and $y$. For example, consider a closed cylinder with radius $r$ m and height $h$ m. Its total surface area (two circular ends plus the curved side) is

$$A = 2\pi r^2 + 2\pi r h.$$

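Now suppose that $r$ and $h$ are both measured as 3 metres, with $r$ known to within 5% and $h$ to within 1%, so that $|\delta r| \le 0.05 \times 3 = 0.15$ m and $|\delta h| \le 0.01 \times 3 = 0.03$ m. Then the error in $A$ is given approximately by

$$\delta A \approx \frac{\partial A}{\partial r}\,\delta r + \frac{\partial A}{\partial h}\,\delta h = (4\pi r + 2\pi h)\,\delta r + 2\pi r\,\delta h.$$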

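Thus, with $r = h = 3$, to first order,

$$|\delta A| \le 18\pi \times 0.15 + 6\pi \times 0.03 = 2.88\pi \approx 9.05\ \text{m}^2.$$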

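Since $A = 2\pi(3)^2 + 2\pi(3)(3) = 36\pi \approx 113.1$ m², the relative error, as a percentage, is thus at most

$$\frac{|\delta A|}{A} \times 100\% \approx \frac{2.88\pi}{36\pi} \times 100\% = 8\%.$$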
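The same estimate can be sketched in a few lines of Python, assuming a closed cylinder with both ends included, $A = 2\pi r^2 + 2\pi r h$. The helper names `surface_area` and `linearised_error_bound` are hypothetical. The script also computes the exact change in $A$ when both measurements sit at the top of their ranges, to show how close the first-order estimate is.

```python
import math

def surface_area(r, h):
    """Total surface area of a closed cylinder: two circular ends plus the curved side."""
    return 2 * math.pi * r**2 + 2 * math.pi * r * h

def linearised_error_bound(r, h, dr, dh):
    """First-order bound |dA| <= |dA/dr| |dr| + |dA/dh| |dh|."""
    dA_dr = 4 * math.pi * r + 2 * math.pi * h
    dA_dh = 2 * math.pi * r
    return abs(dA_dr) * abs(dr) + abs(dA_dh) * abs(dh)

r = h = 3.0
dr, dh = 0.05 * r, 0.01 * h  # 5% and 1% measurement uncertainty
A = surface_area(r, h)
dA = linearised_error_bound(r, h, dr, dh)
print(f"A = {A:.2f} m^2, error bound = {dA:.2f} m^2, relative = {100 * dA / A:.1f}%")

# A increases with both r and h, so its largest increase occurs
# when both measurements are at the upper end of their ranges.
exact = surface_area(r + dr, h + dh) - A
print(f"exact change at upper limits = {exact:.2f} m^2")
```

The exact change (about $2.934\pi \approx 9.22$ m²) is slightly larger than the linearised bound of $2.88\pi$ m², because the first-order formula neglects the second-order terms such as $2\pi\,\delta r^2$ and $2\pi\,\delta r\,\delta h$.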